I was happy to see Barnes & Noble report that digital books are outselling physical ones on BN.com, and Amazon announce that the Kindle is its best-selling product ever, because I hope this means much more e-reader content will become available.

I’m always surprised and disappointed when a book I want to buy isn’t Kindle-ized. Then I’ll usually just forget about it; too bad, one sale lost.

If e-reader use is exploding, as B&N and Amazon want everyone to believe, then why isn’t every book, magazine and newspaper available in an e-reader form? Why are B&N and Amazon being so coy about releasing the type of numbers that would help publishers justify the investment?

Both B&N and Amazon have been trumpeting the number of devices sold, but this metric is meaningless, as industry watchers such as John Paczkowski at All Things Digital and Seth Fiegerman at MainStreet.com have pointed out. It’s really mysterious (or is it?) why B&N and Amazon haven’t been releasing information that would give a complete picture of the number of people who are using e-readers, the type of content they’re paying for, and the amount of content they’re buying.

Here are a few of the metrics I’d want to monitor to determine whether the audience is there to justify making e-readers a more significant part of an overall digital strategy.

Increased e-book sales will come either from current e-bookers buying more or more new e-bookers getting devices. Or both. Thus, I’d start with these two actionable key performance indicators, both ratios (not counts):

- Number of e-books sold per current registered e-book buyer

- Number of e-books sold per new registered e-book buyer

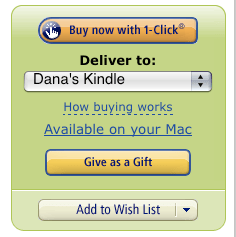
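As a rough sketch of how these two ratios could be computed, here is a small Python example. The purchase log, its `(buyer_id, is_new_buyer)` layout, and the function name `ebooks_per_buyer` are all made up for illustration; neither B&N nor Amazon has published data in this form.

```python
from collections import Counter

# Hypothetical purchase log: one (buyer_id, is_new_buyer) entry per e-book sold.
purchases = [
    ("a", False), ("a", False), ("a", False),
    ("b", False),
    ("c", True),
    ("d", True), ("d", True),
]

def ebooks_per_buyer(purchases, new):
    """Average number of e-books sold per buyer in the chosen segment."""
    counts = Counter(buyer for buyer, is_new in purchases if is_new == new)
    if not counts:
        return 0.0
    return sum(counts.values()) / len(counts)

current_ratio = ebooks_per_buyer(purchases, new=False)  # 4 e-books / 2 buyers
new_ratio = ebooks_per_buyer(purchases, new=True)       # 3 e-books / 2 buyers
print(current_ratio, new_ratio)
```

One caveat: this only counts buyers who appear in the log at least once. Registered device owners who never bought anything would need to come from a separate list, and they would pull both ratios down.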

I’d focus on increasing the number of e-books bought by new e-book users, especially those who got an e-reader as a gift and thus didn’t necessarily choose to become e-readers themselves. (If you’re analytics-driven Amazon then you know this number because you’ve asked that question in the buying process.) E-readers seemed to be a popular Christmas gift in 2010; B&N sold more than 1 million e-books on Christmas Day alone, according to MainStreet.com.

Without knowing more than this one measly number, I’m not convinced e-books are booming. Think about it. You get a Nook for Christmas, you try it out and buy a book while the generous gift giver is right there, smiling at you and saying “Isn’t it great?” Then you go on to the next present or Christmas dinner or talking to your cousin or whatever.

But how many new e-readers continued to buy e-books past the first one? (Bounce rate – one of the greatest metrics of all time.) If they only bought one, was it because the experience was confusing? Did they just not like the e-book experience? Did they not find the content they wanted? Were they dismayed to find their favorite magazine or newspaper only puts part of its content in an e-reader format? (WHY do publishers do this? Oh yeah, cannibalization. Yeah, I’m going to go out and buy that print thing right now.)

If there’s a significant drop in e-book sales from new users then you can dig deep into data that will indicate the specific actions you should take, like improving the buying process, offering incentives to one-e-book buyers in exchange for info on why they don’t buy more, and adding the content people are willing to pay for.
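To make the one-and-done question concrete, here is a minimal Python sketch that measures the share of new buyers who stopped after a single e-book. The input format (a list of buyer IDs, one per purchase) and the name `one_and_done_share` are assumptions for illustration, and the metric is only a loose analogue of a web bounce rate.

```python
from collections import Counter

def one_and_done_share(new_buyer_purchases):
    """Share of new buyers who bought exactly one e-book.

    new_buyer_purchases: hypothetical list of buyer IDs, one per e-book sold.
    """
    counts = Counter(new_buyer_purchases)
    if not counts:
        return 0.0
    single = sum(1 for n in counts.values() if n == 1)
    return single / len(counts)

# Buyers "c" and "e" bought once; "d" and "f" came back for more.
sample = ["c", "d", "d", "e", "f", "f", "f"]
share = one_and_done_share(sample)
print(share)
```

A high share here would be the signal to start digging into the buying process, the incentives and the missing content.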

Because e-books are sold, there’s a treasure trove of demographic and behavioral audience data collected from the purchase process, data that gives all kinds of actionable insights about what kind of content is worth offering in an e-reader form, e.g., number of e-books sold by type (book, magazine, newspaper, etc.), category/topic, fiction/nonfiction, author, new/old.
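A quick sketch of that kind of breakdown, assuming each sale is recorded with a few descriptive fields. The field names (`type`, `category`, `fiction`) and the sample records are invented for this example.

```python
from collections import Counter

# Hypothetical sales records; real purchase data would carry many more fields.
sales = [
    {"type": "book", "category": "mystery", "fiction": True},
    {"type": "book", "category": "business", "fiction": False},
    {"type": "magazine", "category": "business", "fiction": False},
    {"type": "book", "category": "mystery", "fiction": True},
    {"type": "newspaper", "category": "news", "fiction": False},
]

def sales_by(sales, key):
    """Count e-books sold for each value of one field, e.g. type or category."""
    return Counter(sale[key] for sale in sales)

by_type = sales_by(sales, "type")
by_category = sales_by(sales, "category")
print(by_type.most_common())
print(by_category.most_common())
```

The same function works for any other field collected at purchase, such as author or publication year.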

One million e-books sold in a day? Tantalizing. Let’s see more data.